Fighting fake news: we need to build the tools to manage a world where AI has destroyed the truth

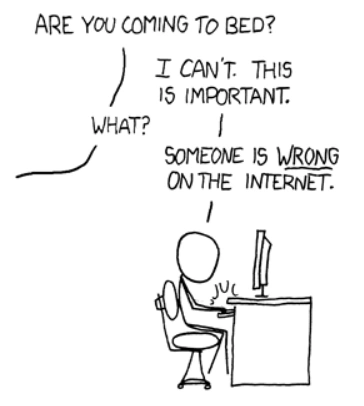

One of my favourite hobbies is ruining other people’s fun.

Because I spend a lot of time on Twitter, over the years it has become an increasingly annoying part of my schtick that if everyone is talking about something that isn’t true, I’ll be first in the queue to call bullshit on it.

There’s nothing I enjoy more than digging into the PDF to read what the controversial policy actually says, or spending five minutes figuring out the broader context for the outrageous thing the politician said, just so I can say “actually, it’s more complicated than that”. It’s all because of my long-held view that we should try to post things that are true – not things that we want to be true.

So as you might imagine, I’m an incredibly popular person. And when I asked my partner if I’m like this in real life too, she just looked at her feet and sighed heavily, for some reason.

But the truth is that, deep down, I know I’m fighting a losing battle against the nonsense and the lies.

The reality is that I’m never going to be able to fact-check the entire internet. Worse still, on a psychological level, our brains are wired so that we don’t actually care all that much about the truth: we treat things that our friends and allies say more credulously, and approach the utterances of our opponents with scepticism and suspicion.

That’s why I want to die inside when I tell someone the damning quote they’re sharing isn’t real, or is missing context, and they respond by saying: “I don’t care if it’s fake, it’s funny… and besides, it feels like it could be true.”

But my problems don’t really matter. My ordeals in being a pedantic asshole on the internet are only foreshadowing what’s coming, because just over the horizon is a tsunami of misinformation, fakeries and lies.

The AI tsunami

I’m talking, of course, about generative AI. We’re seeing on an almost daily basis technological leaps that would have seemed like magic just a few short years ago.

Take AI image generation. In mere months, the technology has leapt from fuzzy DALL-E, unable to render faces properly, to the incredible capabilities of Midjourney 5, which can generate near photo-realistic images of pretty much anything.

It seems like a lifetime ago now, but it was only back in January that we were sneering at AI for not being able to get fingers right. It’s safe to say that those takes did not age well.

There is no sign of the rapid pace of improvements slowing down. I’m sure in the not-too-distant future, we’ll start seeing similarly impressive videos – especially as AI techniques are slowly getting better at creating consistent characters across multiple generations.

So what we’re living through is a Cambrian explosion in AI capabilities. Although it’s exciting to consider the new creative possibilities, it’s scary too. Because the internet is about to be flooded with fake images, text and – eventually – video and audio, at an order of magnitude beyond what we’re currently used to.

Worse still, there’s nothing we can do to stop it – because the genie is already out of the bottle.

Why? Because even if the big AI platforms were to close down, become heavily regulated, or otherwise restrict how they are used, it wouldn’t stop the AI bullshit tsunami. Even if the AI worriers get the “pause” they want, it is too late. Because generative AI doesn’t require a super-computer or specialist equipment. It just runs on the regular, normal computers that we all have on our desks, in our laps, and in our pockets.

I’ve seen this myself. I was able to download Stable Diffusion, an open source AI image generator (it was a little over 4GB), to my Mac and run it locally – no cloud required. It’s only a matter of time until we have apps that make generating realistic images as easy as posting on Instagram or composing a tweet.

The tools are already getting easier to use. Literally as I was in the midst of writing this essay, Adobe released a beta version of Photoshop that has built-in image generation powered by its own Firefly generative AI model.

So it’s inevitable that generative AI capabilities will become accessible to anyone who wants them.

Scale is critical. You could argue that you don’t need AI to spread nonsense online. We humans are already pretty good at it ourselves, and often all you need is to see a photo of a politician out of context, or even just confidently assert something without evidence, to see retweets and likes racking up.

But this is why I think AI is at risk of drowning us: because it makes fakery even easier. A photo is more compelling than a tweet – and simply seeing it glide past as you scroll a news feed is going to make it more believable, as you won’t be forensically inspecting everything you see.

This scale could have new, emergent, downside consequences for our information ecosystem. If you can’t quite picture it, consider how in the 1990s it was technically possible to make your own website, but it was hard to do. It was only with the arrival of social media that posting online became easy. As a consequence, many more people did it, and because of that scale, a number of new threats and challenges emerged that still cause problems today.

That’s why the tsunami of fakeries is inevitable. It’s like inventing nuclear weapons in a world where every household already has a stash of uranium piled up in their garden. Sure, some people might choose to build a nuclear power generator – but some people might have more dangerous ideas.

Selling shovels to gold miners

So far I’ve painted quite a dark picture of the future, not least because of the perma-migraine I’m going to get from screaming at everyone for posting so much nonsense.

In fact, I’m actually pretty bullish about the good that generative AI can do too. The negative consequences will need managing, but I think this provides a potentially lucrative opportunity for important new businesses and platforms.

So let’s say you buy my thesis, that generative AI is about to flood our information ecosystem with AI-generated content at a scale we haven’t seen before. Imagine what that looks like in a few years’ time: how will we trust anything we see online?

From an impressive viral dance move, to footage from the frontlines in Ukraine, it won’t be easy for journalists – let alone news consumers – to know what is real, and what was the result of a few taps on an app.

If you thought the 2020 US election was fraught and contentious, just wait until every gaffe is dismissed as a deepfake, and social media is flooded with ultra-realistic looking AI forgeries to undermine political opponents.

The only way we’re going to be able to make sense of what we see is if there is an entire ecosystem of institutions, organisations, and APIs to help information consumers sort the facts from the fiction. And the companies that build them are inevitably going to be valuable and important.

For example, an obvious way to help identify real images would be for social networks such as Facebook and Twitter to display a little “verified” tick against images that have been confirmed as authentic. eBay, Airbnb, or dating apps could find such functionality useful too – perhaps performing a quick check when a new image is uploaded to restrict the fakes. And maybe we’d even want the “Photos” app on our phones to determine whether the photo we saved was real too.

The technical fight against fake news

Technically, this shouldn’t be too difficult to implement. A technique known as “hashing” has been widely used for a long time by authorities and platforms for identifying child abuse and terrorist content. Basically, some clever maths is applied to an image to create a key that, for practical purposes, uniquely refers to it – and if you ‘hash’ the same image twice, you get the same key. So you can identify any given image, without needing to store the original. (A strictly cryptographic hash only matches byte-for-byte identical files, which is why the systems built to catch known abuse imagery typically use “perceptual” hashes that tolerate resizing and recompression.)
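To make the idea concrete, here is a minimal sketch in Python using SHA-256 from the standard library. The function name `image_hash` and the stand-in byte strings are my own illustrative assumptions, not part of any real platform's system, and a production tool would more likely use a perceptual hash so that a resized copy still matches.

```python
import hashlib


def image_hash(image_bytes: bytes) -> str:
    """Return the SHA-256 digest of the raw image bytes as a hex string."""
    return hashlib.sha256(image_bytes).hexdigest()


# Stand-in bytes (hypothetical); in practice these would be read from an image file.
original = b"pretend these bytes are a photograph"
copy_of_original = bytes(original)
edited = original + b"\x00"  # a one-byte change to the file

# The same bytes always produce the same key.
print(image_hash(original) == image_hash(copy_of_original))
# Any change to the bytes produces a different key (exact match only).
print(image_hash(original) == image_hash(edited))
```

The key is a fixed-length string, so a database only has to hold that string rather than the picture itself.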

The tricky part for the torrent of AI imagery is going to be maintaining the database of authenticated images. This is where I see a huge opportunity to sell shovels during a gold rush.

It’s unlikely that there will ever be one, single, canonical database – but I can envisage a situation where there are multiple, different databases that add up to a less chaotic information environment.

As such, I see three main types of authentication tool:

Trusted Databases will use the weight of their brand and reputation to convey their authority when determining the authenticity of an image. It’s a role that ‘legacy’ organisations with decades of credibility could perform well in.

For example, perhaps Getty Images or even news organisations such as the Press Association or the BBC could build out publicly accessible databases of authenticated photos. If a photo is listed as real here, then you can trust that it is real – just as if something is reported by the BBC, you can broadly assume that due diligence has been done and that the facts are accurate, in a way that you can’t if you read the same information on some random Twitter account or blog.

Crucially, what could make them work effectively – and provide new revenue streams – is that they could go beyond simply photos they own or have licensed, and could generalise their authentication credibility to images across the web. This is because, as per the above, they wouldn’t need to actually save or host copies of images (which would be a copyright and licensing nightmare) – they can simply store the hash, for authentication purposes.
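As a rough sketch of what "store the hash, not the image" could look like, here is a toy in-memory registry in Python. The `TrustedDatabase` class, its method names and the example agency are all hypothetical, and a real service would need persistent storage, access control and probably perceptual rather than exact hashing.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TrustedDatabase:
    """Toy registry that keeps only hashes and a note, never the image itself."""

    name: str
    _records: dict = field(default_factory=dict)

    def authenticate(self, image_bytes: bytes, note: str) -> str:
        """Record that this organisation vouches for the image; return its hash."""
        digest = hashlib.sha256(image_bytes).hexdigest()
        self._records[digest] = note
        return digest

    def lookup(self, image_bytes: bytes) -> Optional[str]:
        """Return the note for a known image, or None if it was never registered."""
        digest = hashlib.sha256(image_bytes).hexdigest()
        return self._records.get(digest)


# Hypothetical usage with stand-in bytes instead of a real photo file.
db = TrustedDatabase("Example Picture Agency")
photo = b"stand-in bytes for a press photo"
db.authenticate(photo, "Verified by picture desk")

print(db.lookup(photo))                 # the note recorded above
print(db.lookup(b"some other image"))   # None: nothing on record
```

Because only the digest is kept, the registry can vouch for an image it has no licence to host.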

The second type of tool is what I’m calling Provenance Scrapers, which would work more like Google, spidering across the web and scraping through every webpage, looking for images. Using the information gathered, these scrapers could attempt to determine the provenance of any given image by looking for the earliest possible sighting of it on the web, and allow individual images to be traced around to see where they came from – like a more sophisticated version of a reverse image search.
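The "earliest possible sighting" part of that idea can be sketched in a few lines of Python. Everything here is assumed for illustration: the `Sighting` record, the `earliest_sighting` function and the example.com addresses are hypothetical, and the hard part (actually crawling the web and matching near-duplicate images) is left out entirely.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class Sighting:
    """One place a crawler saw an image, identified by its hash."""

    image_hash: str
    url: str
    first_seen: datetime


def earliest_sighting(sightings: Iterable[Sighting], image_hash: str) -> Optional[Sighting]:
    """Return the oldest recorded sighting of the image, or None if never seen."""
    matches = [s for s in sightings if s.image_hash == image_hash]
    return min(matches, key=lambda s: s.first_seen, default=None)


# Hypothetical crawl results.
crawl = [
    Sighting("abc123", "https://example.com/viral-repost", datetime(2023, 5, 20)),
    Sighting("abc123", "https://example.org/original", datetime(2023, 5, 1)),
    Sighting("def456", "https://example.net/unrelated", datetime(2023, 4, 1)),
]

first = earliest_sighting(crawl, "abc123")
print(first.url if first else "never seen")
```

The earliest sighting a crawler has on record is not necessarily the true origin, only the oldest copy it happened to find.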

Trusted Hardware would be the final puzzle piece. This would be primarily something for Apple and Google to agree on. But perhaps future versions of iOS and Android could somehow store an authentication hash at source, at the moment of creation – so that it can be proven that a given photo really was taken with the iPhone camera app, and not the result of AI.

I’m not smart enough to know exactly how such a system would work, as it would be uniquely complicated and would need to be carefully designed to maintain privacy. Perhaps hashes could somehow be sent blind to databases maintained by Apple and Google, which would effectively provide nothing more than a checksum.

Finally, a use for the blockchain

Now here’s the wild part. So important could proving the authenticity or provenance of photos and videos become that perhaps it could (whisper it) provide an actual, viable use-case for the blockchain.

This is because of the blockchain’s unique selling point – that it is an immutable, decentralised ledger, or database, that can’t be tampered with.

So, for example, the hashes of authentic (or authenticated) photos could be written to it and checked against, and the record would essentially last forever – so that even if the authenticating body disappears (say, the BBC is blown up by a future government, or Getty Images goes bankrupt), the records will remain in circulation.

The fact that the blockchain is essentially append-only – records can be added, but not quietly changed or removed – means that we can trust that its records haven’t been meddled with. We should then be able to effectively page back through the chain to check the provenance of any images listed on it.
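The tamper-evidence property can be illustrated with a simple hash chain in Python, where each record includes the hash of the one before it. This is only a single-machine sketch under my own assumptions (the `append`, `block_hash` and `is_intact` helpers are hypothetical); it has none of the decentralisation or consensus machinery of a real blockchain.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(prev_hash: str, image_hash: str) -> str:
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps({"prev": prev_hash, "image": image_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


def append(chain: list, image_hash: str) -> None:
    """Add a new record that is linked to the current end of the chain."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"prev": prev, "image": image_hash, "hash": block_hash(prev, image_hash)})


def is_intact(chain: list) -> bool:
    """Walk the chain and confirm every link and every stored hash still matches."""
    prev = GENESIS
    for block in chain:
        if block["prev"] != prev:
            return False
        if block["hash"] != block_hash(block["prev"], block["image"]):
            return False
        prev = block["hash"]
    return True


chain = []
for photo in (b"photo one", b"photo two", b"photo three"):
    append(chain, hashlib.sha256(photo).hexdigest())

print(is_intact(chain))  # the untouched chain verifies

# Swap in a forged image hash in the middle of the ledger.
chain[1]["image"] = hashlib.sha256(b"forged photo").hexdigest()
print(is_intact(chain))  # the edit is detected
```

Changing an old record breaks its stored hash, which is what lets anyone paging back through the chain spot the meddling.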

So perhaps the real legacy of NFTs might turn out to be not garish drawings of apes – but a new way of proving authenticity across the web.

Signal and noise

Whether or not the platforms I imagine emerge, one thing we can say for certain is that in the future there is going to be a lot more noise. The ease of generative AI, in the hands of every bot and bullshitter online, means that finding the truth is going to become harder than ever.

But I am pleased to say that there are early signs of the industry reacting. For example, the Coalition for Content Provenance and Authenticity has already signed up Adobe, Microsoft, Intel and the BBC as members. The “Trusted Databases” hopefully can’t be far away.

Similarly, the BBC has also recently launched BBC Verify, which alongside veteran open source intelligence analysts Bellingcat is a solid foundation on which to build fact-checking credibility.

There are already companies who have built scrapers for the purpose of things such as copyright enforcement – which will use many of the same technological building blocks as the envisaged “Provenance Scrapers”.

So it is still early days, but I’m convinced: when the AI tsunami floods us with falsehoods, the truth is going to become a lot more valuable. There’s a big business opportunity for the companies that build the institutions that will maintain it.

Image by Jacquie Boyd via Ikon Images

This article has been tagged as a “Great Read”, a tag we reserve for articles we think are truly outstanding.
